In this work we report two successful cases of developing neural networks of spiking neurons for controlling mobile robots. In the first case we use a toy robot, a crocodile, driven by a neural network in-silico. We show that this so-called neuroanimat is capable of detecting internal events of synchronization of network responses to stimuli. In the second example we employ a spiking neural network for building a human-robot interface. Using a bracelet with eight electromyographic sensors we have shown that the interface can faithfully detect myographic signals, classify them according to hand gestures, and send the corresponding commands to the robot. Although the two applications belong to different areas of Neuroscience, they are based on a common approach of neural computations. We note that in both cases besides neural networks there are no additional external algorithms for the decision-making.

INTRODUCTION

Neurons, the main building blocks of the brain, have enormous computational capacity. Therefore, the development of mathematical models of spiking neurons, and of neural networks built on them, is a promising approach for applied computations (Paugam-Moisy and Bohte, 2009). However, the number of successful technical implementations remains very limited. Recent studies have shown that networks of spiking neurons can be used for recognition of patterns of different origin (Bichler et al., 2011; Loiselle et al., 2005; Kasabov et al., 2012). In this work we report two successful studies of spiking neural networks. In the first case we use a toy robot, a crocodile, driven by a neural network in-silico. We show that this so-called neuroanimat is capable of detecting internal events of synchronization of network responses to stimuli. In the second example we employ a spiking neural network for building a human-robot interface. Using a bracelet with eight electromyographic sensors we classify hand gestures in real time and use them to control a mobile robot.

METHODS

Neuroanimat. We developed a neuro-simulator, called NeuroNet, which models a network of 400 excitatory and 100 inhibitory Izhikevich-type neurons (Izhikevich, 2004). Topologically, the neurons are distributed over the nodes of a 2D graph whose edges correspond to couplings between cells. The time delay in spike transmission between two neurons is proportional to the distance between the corresponding nodes. Each neuron receives about 30 afferent couplings, and the coupling probability decreases with the distance between neurons. The model simulates two types of synaptic plasticity. Short-term plasticity (facilitation and depression) is implemented by varying the transmitter release according to the frequency of presynaptic spikes (Tsodyks et al., 1998). Long-term plasticity is based on spike-timing dependent plasticity (STDP) (Morrison et al., 2008): if a postsynaptic spike follows a presynaptic spike, the coupling strength increases; for the inverse spike timing, the coupling strength decreases. An ultrasonic distance sensor placed on the robot head provides sensory information to the neural network. The sensor modulates the frequency of square pulses produced by a virtual generator. The output of this generator is fed to an arbitrary part of the network. Finally, the network output controls the robot movements.
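The two model ingredients named above can be illustrated with a minimal Python sketch that integrates a single Izhikevich neuron and evaluates a pair-based STDP weight change. This is not the NeuroNet code: the regular-spiking parameters (a, b, c, d), the Euler time step, the input current, and the STDP amplitudes and time constant are assumed values chosen only for illustration.

```python
import math

def simulate_izhikevich(current, t_max_ms=1000.0, dt=0.5,
                        a=0.02, b=0.2, c=-65.0, d=8.0):
    """Integrate one Izhikevich neuron with forward Euler.

    Returns the list of spike times in ms. Parameters a, b, c, d are the
    commonly used regular-spiking values (an assumption, not from the paper).
    """
    v, u = c, b * c          # membrane potential and recovery variable
    spikes = []
    for k in range(int(t_max_ms / dt)):
        v += dt * (0.04 * v * v + 5.0 * v + 140.0 - u + current)
        u += dt * a * (b * v - u)
        if v >= 30.0:        # spike: record it and reset
            spikes.append(k * dt)
            v, u = c, u + d
    return spikes

def stdp_dw(dt_ms, a_plus=0.01, a_minus=0.012, tau_ms=20.0):
    """Pair-based STDP weight change for dt_ms = t_post - t_pre.

    Positive dt (post after pre) potentiates, negative dt depresses.
    Amplitudes and time constant are hypothetical.
    """
    if dt_ms > 0:
        return a_plus * math.exp(-dt_ms / tau_ms)
    return -a_minus * math.exp(dt_ms / tau_ms)

if __name__ == "__main__":
    print(len(simulate_izhikevich(10.0)), "spikes in 1 s for I = 10")
    print("dw(+5 ms) =", stdp_dw(5.0), " dw(-5 ms) =", stdp_dw(-5.0))
```

With a constant input the neuron fires tonically, while without input it relaxes to rest; the sign of the STDP update depends only on the order of the pre- and postsynaptic spikes.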

Human-robot interface. We developed a hardware-software complex, called MyoClass, for real-time recording of EMG signals and recognition of hand gestures for controlling a mobile robot. The recording is performed with a MYO™ Thalmic bracelet, which provides eight sEMG signals simultaneously (the embedded MYO Thalmic gesture recognition was switched off). We used nine static hand gestures as motor patterns. During an experiment, users performed four series of nine gestures each, selected in random order. To extract discriminating features from the sEMG signals we employed the same neuronal model as in the neuroanimat approach. The network output was connected to a multilayer artificial neural network for feature classification. The standard error backpropagation algorithm was used for learning.

Robot platforms. Both robot platforms, for the animat (a crocodile) and for testing the human-robot interface (a car), were built from a LEGO Mindstorms® NXT kit. Communication between all parts was implemented through a Bluetooth® interface.

RESULTS

Neuroanimat: basic behaviours. We first checked that the neural network in-silico could exhibit all basic properties of an in-vitro neuronal culture, such as bursting activity (Wagenaar et al., 2006) and plasticity provoked by external stimuli (Pimashkin et al., 2013). Adaptive structural changes in the network are related to long-term potentiation of the coupling weights. We found that such changes can lead to new emerging functional properties, namely to synchronization of the network firing with external stimuli. We identified two criteria, operating at the level of single neurons, that allow distinguishing between synchronous and asynchronous network activities:

a. High frequency (> 8-11 Hz) spiking of neurons; b. Stable phase lag (about 60-70 ms) of fired spikes relative to the stimulus onset.
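As a rough illustration, the two criteria can be checked offline on recorded spike times. The Python sketch below is an assumption about how such a test might be written, not the mechanism used in the animat (which relies on neuronal filters): the function name, the use of the first spike after each stimulus, and the required fraction of phase-locked responses are all hypothetical.

```python
import numpy as np

def is_synchronized(spike_times_ms, stim_onsets_ms, min_rate_hz=8.0,
                    lag_window_ms=(60.0, 70.0), min_locked_fraction=0.8):
    """Return True if a neuron satisfies both criteria (a) and (b)."""
    spikes = np.sort(np.asarray(spike_times_ms, dtype=float))
    onsets = np.sort(np.asarray(stim_onsets_ms, dtype=float))
    if spikes.size < 2 or onsets.size == 0:
        return False

    # Criterion (a): mean firing rate between the first and last spike.
    duration_s = (spikes[-1] - spikes[0]) / 1000.0
    if duration_s <= 0:
        return False
    rate_hz = (spikes.size - 1) / duration_s

    # Criterion (b): lag of the first spike following each stimulus onset.
    idx = np.searchsorted(spikes, onsets, side="left")
    valid = idx < spikes.size
    lags = spikes[idx[valid]] - onsets[valid]
    if lags.size == 0:
        return False
    locked = (lags >= lag_window_ms[0]) & (lags <= lag_window_ms[1])

    return bool(rate_hz > min_rate_hz and locked.mean() >= min_locked_fraction)

if __name__ == "__main__":
    onsets = np.arange(0, 1000, 100)          # 10 Hz stimulation
    print(is_synchronized(onsets + 65, onsets))  # phase-locked response
```

Here a neuron firing once per stimulus at a fixed 65 ms lag under 10 Hz stimulation satisfies both criteria, whereas the same lag at 5 Hz stimulation fails the rate criterion.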

Fig. 1. “Eating” behaviour based on the synchronization phenomenon in the animat. Left and right columns correspond to before and after learning, respectively. The output from the ultrasonic sensor (s) provokes synchronization (circled in red) of neurons in the main network (n is a representative neuron). This in turn leads to activation of the phase filter neuron (d1) and later of the frequency filter neuron (d2), and the animat opens the jaws.

To combine both criteria we propose a neural circuit that includes phase and frequency neuronal filters coupled in series. The filter output then passes through a neuron-detector, which fires spikes when the network activity synchronizes with the stimulus. The phase filter employs axonal delays in two inhibitory neurons placed between the stimulated part of the network and the neuron-detector. The first neuron receives input through the geometrically shortest path and thus suppresses excitatory spikes in the time range [20-60] ms after the stimulus onset. The second neuron, placed at a distance from the detector, suppresses the excitation in the range [70-120] ms. Thus, these neurons strongly inhibit all spikes at the detector except those falling into the range [60-70] ms. The frequency filter relies on the effect of presynaptic facilitation in the framework of short-term synaptic plasticity. The filter parameters were tuned so that the amount of neurotransmitter release increases for series of presynaptic spikes arriving at rates higher than 8 Hz. Thus, output spikes are generated only for high-frequency activity of the presynaptic neuron.

Spontaneous activity in the neural network eventually leads to an arbitrary movement of the robot. When an object is present in the sensory field of the robot, its sensory system generates an output that innervates the neural network. This in turn may lead to a strong increase of the motor activity. The combination of spontaneous and evoked activities in the neural network may lead to the behaviour of searching for a target. Even in the absence of any object in the immediate neighbourhood, the animat from time to time begins moving and “looking” for objects or walls in the room. In the case of synchronization events we observed “eating” behaviour (Fig. 1). At high-frequency synchronization, neuronal spikes pass the phase and frequency filters, which leads to activation of motoneurons driving quick opening and closing of the jaws.
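The action of the phase and frequency filters described above can be mimicked in a few lines of Python. This is a simplified, event-based sketch and not the neuronal circuit itself: the phase filter is reduced to a time-window test, the frequency filter uses only the facilitation variable of a Tsodyks-Markram-type synapse, and the baseline release U, the facilitation time constant, and the release threshold are assumed values picked so that the cut-off lies near 8 Hz.

```python
import math

def phase_filter(spike_times_ms, stim_onset_ms, window_ms=(60.0, 70.0)):
    """Keep spikes whose lag from the stimulus onset lies in the window
    left uninhibited by the two delayed inhibitory neurons."""
    lo, hi = window_ms
    return [t for t in spike_times_ms if lo <= t - stim_onset_ms <= hi]

def facilitation_trace(spike_times_ms, U=0.1, tau_f_ms=200.0):
    """Release variable u just after each presynaptic spike.

    Between spikes u decays exponentially; each spike adds U * (1 - u).
    U and tau_f_ms are hypothetical parameters.
    """
    u, last_t, trace = 0.0, None, []
    for t in spike_times_ms:
        if last_t is not None:
            u *= math.exp(-(t - last_t) / tau_f_ms)
        u += U * (1.0 - u)
        trace.append(u)
        last_t = t
    return trace

def frequency_filter(spike_times_ms, threshold=0.19, U=0.1, tau_f_ms=200.0):
    """Pass only spikes whose facilitated release exceeds the threshold."""
    trace = facilitation_trace(spike_times_ms, U=U, tau_f_ms=tau_f_ms)
    return [t for t, u in zip(spike_times_ms, trace) if u > threshold]

if __name__ == "__main__":
    fast = [100.0 * k for k in range(20)]   # 10 Hz train
    slow = [250.0 * k for k in range(20)]   # 4 Hz train
    print(len(frequency_filter(fast)), len(frequency_filter(slow)))
    print(phase_filter([10.0, 65.0, 90.0], stim_onset_ms=0.0))
```

With these assumed parameters a 10 Hz train builds up enough facilitation to pass after a few spikes, while a 4 Hz train never does, which reproduces the qualitative behaviour of the frequency filter.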

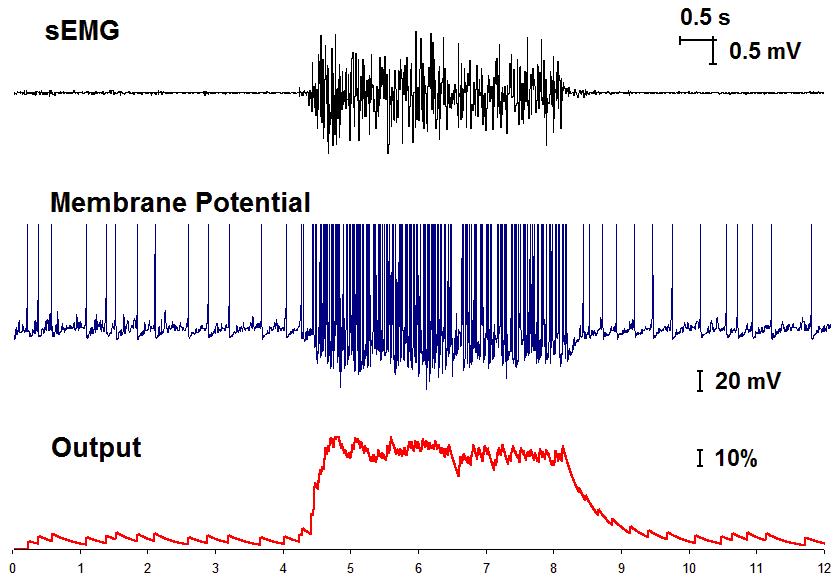

Spiking neurons in human-robot interface. The myographic bracelet provides eight sEMG signals simultaneously. The purpose of the spiking neural network is to extract the most discriminative features from these signals so that the artificial neural network can easily classify them according to the hand gestures. Spiking neurons, acting as sensory neurons, receive myographic signals from the bracelet and produce output spikes. We consider the output synaptic signal, evaluated in the framework of the Tsodyks-Markram model, as a continuously changing feature, which we then sample at discrete time instants. Figure 2 shows a representative example of an sEMG signal (top), the transmembrane potential of the spiking sensory neuron (middle) and its output (bottom). During experiments we tuned the parameters of the spiking neurons to ensure high classifier accuracy, comparable with that obtained using a classic sEMG feature such as the root mean square value. For ten subjects (25-56 years old) the classifier accuracy was 92.3±4.2%. We then tested the human-robot interface in real time.
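For reference, the classic root mean square (RMS) feature used as the baseline for comparison can be computed per channel over sliding windows. The Python sketch below is a generic illustration; the window and step lengths (in samples) and the function name are assumptions, not values taken from the experiments.

```python
import numpy as np

def rms_features(emg, window=40, step=20):
    """Sliding-window RMS of a multichannel sEMG recording.

    emg    : array of shape (n_samples, n_channels), e.g. 8 channels
    window : window length in samples (hypothetical value)
    step   : shift between consecutive windows in samples (hypothetical)
    Returns an array of shape (n_windows, n_channels).
    """
    emg = np.asarray(emg, dtype=float)
    starts = range(0, emg.shape[0] - window + 1, step)
    return np.array([np.sqrt(np.mean(emg[s:s + window] ** 2, axis=0))
                     for s in starts])

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    signal = rng.normal(size=(200, 8))   # synthetic stand-in for 8 sEMG channels
    print(rms_features(signal).shape)
```

Each row of the result is one eight-dimensional feature vector that could be passed to the classifier in place of the sampled synaptic output.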

The user controlled the mobile robot using hand gestures. Every recognized gesture (except “rest”) was associated with a movement instruction for the robot: “drive”, “reverse”, “forward right”, “forward left”, “reverse right”, “reverse left”, “stop”, and “fire”. Our results show that all users managed to control the robot fluently after 3-10 trials.
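The association between recognized gestures and robot instructions amounts to a lookup table. The Python sketch below shows one way to express it; the class indices, the choice of index 0 for “rest”, and the ordering of the commands are hypothetical, since the actual gesture-to-command assignment is not specified here.

```python
# Commands listed in the text; the index assigned to each one is an assumption.
COMMANDS = ["drive", "reverse", "forward right", "forward left",
            "reverse right", "reverse left", "stop", "fire"]
REST_CLASS = 0  # hypothetical class index of the "rest" gesture

def gesture_to_command(gesture_class):
    """Map a classifier output (0..8) to a robot command, or None for rest."""
    if gesture_class == REST_CLASS:
        return None  # "rest" sends no instruction
    if not 1 <= gesture_class <= len(COMMANDS):
        raise ValueError(f"unknown gesture class: {gesture_class}")
    return COMMANDS[gesture_class - 1]

if __name__ == "__main__":
    for g in range(9):
        print(g, gesture_to_command(g))
```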

Fig. 2. A representative example of processing of an EMG signal by a spiking sensory neuron.

DISCUSSION

In this work we reported two successful cases of developing neural networks of spiking neurons for controlling mobile robots. In the first case the neural network works autonomously as a “brain” of an animat. We have shown that it is able to learn from the environment and to reproduce basic behaviour of advancing towards an object and “eating”. In the second case the neural network has been used as a processor for human-robot interface. We have shown that the interface can faithfully detect myographic signals, classify them according to hand gestures, and send the corresponding commands to the robot. Although the two applications belong to different areas of the Control Theory and applied Neuroscience, they are based on a common approach of neural computations. We note that in both cases besides neural networks there are no additional external algorithms for the decision-making.

This work was supported by the Russian Science Foundation project 15-12-10018 (Sections 1, 2.1, 3.1 and 4) and project 14-19-01381 (Sections 2.2, 2.3, and 3.2).

Bichler, O., et al., 2011. Unsupervised features extraction from asynchronous silicon retina through spike-timing-dependent plasticity. Proc. of IJCNN, pp. 859-866.

Izhikevich, E.M., 2004. Which model to use for cortical spiking neurons? IEEE Trans. Neural Netw., 15, 1063-1070.

Kasabov, N., et al., 2011. On-line spatio- and spectro-temporal pattern recognition with evolving spiking neural networks utilising integrated rank order- and spike-time learning. Neural Networks.

Loiselle, S., et al., 2005. Exploration of rank order coding with spiking neural networks for speech recognition. In Proc. Int. Joint Conf. on Neural Networks, 2076–2080.

Morrison, A., Diesmann, M., Gerstner, W., 2008. Phenomenological models of synaptic plasticity based on spike timing. Biol Cybern., 98, 459-478.

Paugam-Moisy, H., Bohte, S.M., 2009. Computing with spiking neuron networks. In: Kok J, Heskes T (eds) Handbook of natural computing. Springer Verlag.

Pimashkin, A., et al., 2013. Adaptive enhancement of learning protocol in hippocampal cultured networks grown on multielectrode arrays. Frontiers in Neural Circuits.

Tsodyks, M., Pawelzik, K., Markram, H., 1998. Neural networks with dynamic synapses. Neural Comput., 10, 821–835.

Wagenaar, D.A., Pine, J., Potter, S.M., 2006. An extremely rich repertoire of bursting patterns during the development of cortical cultures. BMC Neurosci. Art. no. 11.